Delphi Programming Language — Deep 2025 Guide: AI, Modernization, Migration & Career Comparison

Practical, long-form guide with hands-on examples, migration case studies, and pilot project plans — copy-paste-ready for immediate use.

The Delphi programming language remains a focused platform for native desktop and cross-platform applications in enterprise settings. This guide covers the state of Delphi in 2025, practical integration patterns for AI/LLMs and Python, migration strategies, modernization steps (CI/CD, packaging, security), and pilot plans you can run right away.

Table of contents

- What the Delphi programming language is (quick recap)

- How Delphi compares to C#, Java, Python, and C++

- Practical AI & LLM integration with Delphi

- Delphi + Python: Python4Delphi (P4D) and microservice patterns

- 2025 job market & hiring demand

- Migration case studies: Delphi → .NET/Java

- Modernization: step-by-step plan

- Security & compliance checklist

- Concrete pilot projects & rollout plans

- FAQ and next steps

- Appendix — Practical links & tools

1 — What the Delphi programming language is (quick recap)

The Delphi programming language is Object Pascal packaged with a commercial, RAD-focused IDE (RAD Studio) and a set of libraries: the RTL (runtime library), VCL (Visual Component Library) for Windows GUIs, FMX (FireMonkey) for cross-platform GUIs, and FireDAC for database connectivity.

Delphi compiles ahead of time to native machine code, typically producing a single executable that needs no separate runtime such as the JVM or .NET. Embarcadero continues to ship RAD Studio releases and a free Community Edition, and the toolchain is actively used for maintenance and modernization projects.

Key practical strengths

- Fast UI prototyping and visual form designers (RAD).

- Native performance (AOT compiled).

- Mature database tooling (FireDAC).

- Good Windows ecosystem support (VCL) and cross-platform options via FMX.

When people say “Delphi,” they usually mean the whole RAD Studio ecosystem rather than only the language syntax.

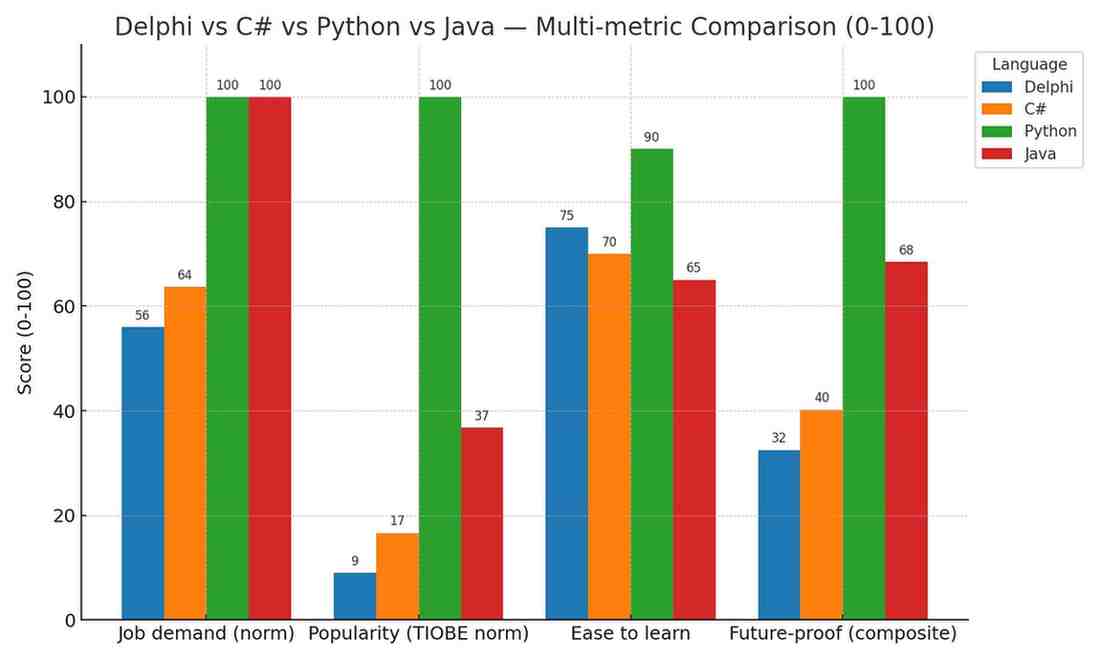

2 — Delphi vs C#, Java, Python, C++ — jobs, future-proofing, specs & performance

Below is a concise comparison oriented for technical decision-makers and developers deciding which skill to invest in or which stack to pick for a project.

Comparison table (practical view)

| Criteria | Delphi | C# (.NET) | Java | Python | C++ |
|---|---|---|---|---|---|
| Typical use cases | Legacy LOB apps, desktop utilities, ISV products | Enterprise apps, cloud, desktop, backend | Enterprise servers, Android, backend services | ML/AI, scripting, automation, data science | Systems, high-performance code, games |
| Compilation model | Native AOT, single binary | JIT by default; Native AOT and self-contained publish available | JVM bytecode with JIT; AOT options (e.g., GraalVM) | Interpreted bytecode (CPython) | Native AOT |
| Ecosystem & libs | Smaller, niche but mature | Very large & growing | Very large | Massive (esp. AI) | Large for systems |
| Performance | Very good (native) | Very good (JIT; Native AOT available) | Good | Lower (except C extensions) | Very high |
| Hiring market (2025) | Niche, steady for maintenance & ISVs | Broad demand across cloud & enterprise | Broad enterprise demand | Very strong for AI/ML roles | Strong for systems roles |
| Future-proof (5–10 yrs) | Safe for existing codebases; limited greenfield use | Strong (Microsoft investment) | Strong (enterprise) | Very strong (AI) | Strong (systems) |

Interpretation: Choose Delphi if you must ship native executables, maintain an existing Delphi codebase, or need fast desktop RAD. For cloud-first, AI, or greenfield web projects, languages like Python, C#, or Java give bigger talent pools and more modern cloud integrations. Delphi is future-proof for its targeted use cases but remains a specialized skillset.

3 — Practical AI & LLM integration with Delphi

Integrating AI into Delphi applications typically follows one of three patterns:

- Call an external LLM REST API — easiest and fastest (OpenAI, Azure, Anthropic).

- Host an internal ML microservice (Python) — centralized model hosting; client stays lightweight.

- Embed small models locally — for tiny models or on-device inference using native runtimes (less common for large LLMs).

Best practices (short)

- Avoid blocking UI threads — use asynchronous HTTP calls or background tasks.

- Never hardcode API keys in shipped binaries — use server proxies, vaults, or token exchange systems.

- Cache repeated prompts/responses to reduce cost and latency.

- Treat LLM outputs as suggestions — apply sanitization and business-rule checks.
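The last practice can be sketched on the service side in Python. This is a minimal sketch, assuming a hypothetical use case in which the model is asked to reply with a JSON object holding a `category` and a `priority`. The field names, the allowed values, and the function name `validate_suggestion` are illustrative assumptions, not part of any provider's API.

```python
import json
from typing import Optional

# Hypothetical business rules: the only values the application accepts.
ALLOWED_CATEGORIES = {"billing", "technical", "other"}
MAX_PRIORITY = 5


def validate_suggestion(raw_reply: str) -> Optional[dict]:
    """Return a cleaned suggestion, or None if the reply breaks a rule."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError:
        return None  # the model did not return valid JSON
    if not isinstance(data, dict):
        return None
    category = str(data.get("category", "")).strip().lower()
    priority = data.get("priority")
    if category not in ALLOWED_CATEGORIES:
        return None
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(priority, bool) or not isinstance(priority, int):
        return None
    if not 1 <= priority <= MAX_PRIORITY:
        return None
    return {"category": category, "priority": priority}
```

Anything that returns `None` should fall back to a manual path rather than being shown or stored as if it were trusted data.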

Example: Make a non-blocking HTTP POST to an LLM endpoint (Delphi)

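The sample runs a blocking THTTPClient.Post inside a TTask worker, so the UI thread stays responsive, and queues the result back to the main thread.

    uses
      System.SysUtils, System.Classes, System.JSON, System.Net.HttpClient,
      System.Net.URLClient, System.Threading;

    procedure TForm1.SendPromptAsync(const Prompt: string);
    var
      Body: string;
      JsonObj: TJSONObject;
    begin
      // Build the JSON request body on the calling thread.
      JsonObj := TJSONObject.Create;
      try
        JsonObj.AddPair('model', 'gpt-4o-mini');
        JsonObj.AddPair('prompt', Prompt);
        JsonObj.AddPair('max_tokens', TJSONNumber.Create(250));
        Body := JsonObj.ToJSON;
      finally
        JsonObj.Free;
      end;

      // Do the network call on a worker thread. The client and the stream
      // are created and freed there, so they stay alive for the whole request.
      TTask.Run(
        procedure
        var
          Client: THTTPClient;
          ReqStream: TStringStream;
          Resp: IHTTPResponse;
          ReplyText: string;
          Failed: Boolean;
        begin
          Failed := False;
          Client := THTTPClient.Create;
          ReqStream := TStringStream.Create(Body, TEncoding.UTF8);
          try
            try
              Client.ContentType := 'application/json';
              // In production: Client.CustomHeaders['Authorization'] := 'Bearer <token obtained securely>';
              Resp := Client.Post('https://api.your-llm-provider.com/v1/generate', ReqStream);
              ReplyText := 'AI: ' + Resp.ContentAsString;
            except
              on E: Exception do
              begin
                Failed := True;
                ReplyText := 'LLM call error: ' + E.Message;
              end;
            end;
          finally
            ReqStream.Free;
            Client.Free;
          end;
          // Hand the result to the main thread; never touch controls from the worker.
          TThread.Queue(nil,
            procedure
            begin
              if Failed then
                ShowMessage(ReplyText)
              else
                Memo1.Lines.Add(ReplyText);
            end);
        end);
    end;

Notes: The HTTP client and request stream must live until the request finishes, which is why they are owned by the worker task rather than freed by the calling procedure. TThread.Queue posts the UI update to the main thread without blocking the worker; use TThread.Synchronize only when the worker has to wait for the UI. ShowMessage comes from the dialogs unit the form already uses (Vcl.Dialogs or FMX.Dialogs).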

Pattern: Delphi UI + Python microservice (recommended for heavier ML)

Host your model in a containerized Python service (FastAPI). Delphi calls the microservice via REST. Advantages: centralized compute, easier model updates, safer key management.

Minimal Python (FastAPI) example:

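    # fastapi_service.py
    from fastapi import FastAPI
    from openai import OpenAI
    from pydantic import BaseModel

    # OpenAI() reads the key from the OPENAI_API_KEY environment variable.
    client = OpenAI()
    app = FastAPI()


    class Req(BaseModel):
        prompt: str


    @app.post("/v1/generate")
    def generate(req: Req):
        # A plain "def" endpoint runs in a worker thread, so the blocking call is safe.
        resp = client.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": req.prompt}],
            max_tokens=250,
        )
        return {"text": resp.choices[0].message.content}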

Then point the Delphi client's POST request at http://localhost:8000/v1/generate. The service keeps API keys off the desktop and gives you one place to add caching, batching, and input validation.
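The caching mentioned above could live inside the microservice. The following is a minimal in-memory sketch, assuming a single service process and that identical prompts may share a response for a few minutes. `PromptCache`, `get_or_call`, and the `call_llm` callback are hypothetical names introduced for illustration.

```python
import hashlib
import time
from typing import Callable, Dict, Tuple


class PromptCache:
    """In-memory cache keyed by a hash of (model, prompt), with a time-to-live."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._entries: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Hashing keeps keys short and avoids storing raw prompts as dict keys.
        return hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str,
                    call_llm: Callable[[str, str], str]) -> str:
        """Return a fresh cached reply, or call the model and cache the result."""
        key = self._key(model, prompt)
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]
        text = call_llm(model, prompt)
        self._entries[key] = (now, text)
        return text
```

In the endpoint, the provider call would be wrapped as `cache.get_or_call(model, req.prompt, call_llm)`. A shared store would be needed once the service runs as more than one process.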

4 — Delphi + Python: Python4Delphi (P4D) and when to use it

Two main options to combine Delphi and Python:

- Python4Delphi (P4D) — run Python in the same process; ideal for embedding scripting, light preprocessing, or using Python modules directly from Delphi. P4D is an established community project.

- Microservice approach — host Python separately (preferred for heavy ML). Microservices are language-agnostic, easier to scale, and keep the Delphi client lightweight.

When to choose P4D

- You need tight, in-process scripting (macros, extension plugins).

- You want to call small Python utilities without network overhead.

When to choose microservices

- Models are large or require GPU/containers.

- You need centralized logging, authentication, or reuse by other clients.

Important: P4D historically had compatibility constraints with specific Python versions; check the P4D repo and Embarcadero PythonEnvironments docs for current supported versions.

5 — 2025 job market & hiring demand for Delphi developers

There are fewer Delphi jobs than jobs in mainstream languages, but the market remains active and well-paid in sectors where legacy Delphi codebases are mission-critical (ISVs, finance, healthcare, manufacturing). Many roles involve maintenance, integration, modernization, or migration projects.

How to position yourself as a Delphi professional (career tactics)

- Combine Delphi with database expertise (FireDAC) and REST/API knowledge.

- Add modernization skills: unit testing, CI/CD, packaging and deployment pipelines, and cloud fundamentals.

- Add AI experience (integrations with LLMs or ML microservices) to stand out.

- Document and share migration or modernization case studies — many teams value evidence of risk-reduced migrations.

- Freelance/contract opportunities: Many organizations hire short-term specialists for modernization pilots, conversion tasks, bug triage, or packaging/signing releases.

6 — Migration case studies: Delphi → .NET/Java (what works and tradeoffs)

Why migrate?

- Broader talent pool.

- Easier cloud/modern ecosystem integration.

- Standardized toolchains and CI/CD pipelines.

Representative case: automated conversion to C#

Some migration vendors run the Delphi source through an automated conversion toolkit to produce C#, then finish with manual polishing and testing.

Tradeoffs & lessons

- Automated tools can convert logic quickly, but UI and third-party components typically need manual work.

- Incremental extraction (wrap and expose business logic as services) reduces risk and allows coexistence with the old UI.

- A full rewrite often carries the highest risk and cost; attempt one only when necessary and with strong regression tests in place.

Recommended migration strategy

- Audit and classify modules by coupling and business value.

- Choose a pilot (low coupling, high value).

- Try automated conversion or service extraction on the pilot.

- Build regression tests and run parallel validation.

- Roll forward with lessons learned.
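The first two steps can be made explicit with a simple scoring pass. This is only a sketch, assuming each module has been given a 1 to 5 coupling score and a 1 to 5 business-value score during the audit. The module names, the scores, and the ranking rule (coupling minus value) are illustrative assumptions, not a standard method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Module:
    name: str
    coupling: int        # 1 (isolated) .. 5 (tangled), from the audit
    business_value: int  # 1 (low) .. 5 (high), from stakeholders


def rank_pilot_candidates(modules: List[Module]) -> List[Module]:
    """Sort so that high-value, low-coupling modules come first."""
    return sorted(modules, key=lambda m: (m.coupling - m.business_value, m.name))


# Hypothetical audit results.
audit = [
    Module("Invoicing", coupling=4, business_value=5),
    Module("Reporting", coupling=2, business_value=4),
    Module("UserPrefs", coupling=1, business_value=1),
]
pilot = rank_pilot_candidates(audit)[0]
print(pilot.name)  # Reporting
```

A spreadsheet does the same job; the point is to record the scores so the pilot choice can be defended later.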

7 — Modernization: step-by-step plan (CI/CD, packaging, security, platform compatibility)

A pragmatic modernization plan focused on minimizing business risk:

Phase 0 — Inventory & assessment (1–2 weeks)

- Build a dependency list (third-party components, OS calls, DB drivers).

- Measure test coverage and create a feature map.
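A first cut of the dependency list can be generated from the `uses` clauses in the source tree. The script below is a rough sketch in Python. It assumes UTF-8 or ASCII source files, treats compiler directives such as `{$IFDEF}` as comments (so conditionally used units are all counted), and can be confused by string literals that contain `//`, `{`, or the word `uses`. The function names are made up for this example.

```python
import re
from collections import Counter
from pathlib import Path
from typing import List

# Remove { ... } and (* ... *) block comments and // line comments before parsing.
_COMMENTS = re.compile(r"\{.*?\}|\(\*.*?\*\)|//[^\n]*", re.DOTALL)
# A uses clause runs from the keyword to the next semicolon.
_USES = re.compile(r"\buses\b(.*?);", re.IGNORECASE | re.DOTALL)
# In .dpr files a unit may be listed as: UnitName in 'path\UnitName.pas'
_IN_PATH = re.compile(r"\s+in\s+'[^']*'", re.IGNORECASE)


def units_used(source: str) -> List[str]:
    """Return the unit names listed in every uses clause of one source file."""
    cleaned = _COMMENTS.sub(" ", source)
    units: List[str] = []
    for clause in _USES.findall(cleaned):
        clause = _IN_PATH.sub("", clause)
        units.extend(name.strip() for name in clause.split(",") if name.strip())
    return units


def inventory(root: str) -> Counter:
    """Count how many .pas/.dpr files under root reference each unit."""
    counts: Counter = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in {".pas", ".dpr"}:
            text = path.read_text(encoding="utf-8", errors="replace")
            counts.update(set(units_used(text)))
    return counts
```

Calling `inventory("src").most_common()` lists the most widely referenced units first. Units that do not belong to the RTL, VCL, FMX, or FireDAC are the third-party and in-house dependencies to review.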

Phase 1 — Stabilize & version control (2–4 weeks)

- Bring code under Git (if not already).

- Automate a repeatable build (CLI dcc32/dcc64, msbuild, or RAD Studio build tools).

- Add basic unit and integration tests.

Phase 2 — CI/CD & artifacts (4–8 weeks)

- Configure CI (GitHub Actions, Azure Pipelines, GitLab CI) to trigger builds and tests. Windows runners or self-hosted Windows agents are commonly used for Delphi builds.

- Produce signed artifacts and store them in a secure artifact repository.

- Deploy to a staging environment automatically.

Phase 3 — Incremental modernization (ongoing)

- Extract core business logic into services (Delphi server or new stack).

- Replace UI or platform-specific parts iteratively.

- Add telemetry, logging, and application monitoring.

Phase 4 — Hardening & release (ongoing)

- Apply code signing, secure update mechanisms and an incident response plan.

- Maintain a vulnerability patch cadence.

CI/CD notes: Many teams use build scripts to compile Delphi projects in CI. Document every step so builds are reproducible.
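A build script of that kind can be very small. The sketch below wraps an MSBuild call in Python. It assumes the CI job has already run RAD Studio's `rsvars.bat` in the same shell so that `msbuild` and the Delphi environment variables are available. The project file name in the usage note and the helper names are hypothetical.

```python
import subprocess
from typing import List


def msbuild_command(project: str, config: str = "Release",
                    platform: str = "Win64") -> List[str]:
    """Assemble an MSBuild invocation for a Delphi .dproj file."""
    return [
        "msbuild",
        project,
        "/t:Build",
        f"/p:Config={config}",      # Delphi projects use Config, not Configuration
        f"/p:Platform={platform}",
    ]


def build(project: str, **options: str) -> None:
    """Run the build; raises CalledProcessError if MSBuild exits non-zero."""
    subprocess.run(msbuild_command(project, **options), check=True)
```

A pipeline step would then call something like `build("MyApp.dproj", platform="Win32")`. Keeping the command assembly in one function makes the exact flags easy to review and to reproduce locally.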

8 — Security & compliance checklist for long-lived Delphi apps

Legacy applications often contain hardcoded secrets, outdated TLS, and poor telemetry. The following checklist helps reduce security and compliance risk:

- Secrets management: Remove hardcoded keys; use a server token exchange or vault (HashiCorp Vault, Azure Key Vault).

- Transport security: Enforce TLS 1.2+ and disable insecure cipher suites.

- Input validation: Sanitize inputs server-side; use parameterized queries to avoid SQL injection.

- Authentication / Authorization: Prefer OAuth2 for APIs and role-based access controls for admin flows.

- Dependency management: Inventory third-party components and ensure patching.

- Logging & monitoring: Add structured logs, centralized log collection, and alerting.

- Data classification: Map PII/PHI and apply appropriate encryption and retention policies.

- Penetration testing & audits: Run tests against exposed surfaces (APIs, update mechanisms).

- Compliance alignment: Map controls to relevant standards (e.g., GDPR, HIPAA, PCI) and record evidence.

Following OWASP and modern secure coding practices will significantly reduce common vulnerabilities.
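The parameterized-query item is the easiest one to demonstrate. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the table and the data are invented for the example. FireDAC offers the same idea through named parameters (for example `:name` set via `ParamByName`).

```python
import sqlite3

# Throwaway in-memory database with hypothetical sample data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO customers (name) VALUES (?)", [("Alice",), ("Bob",)])


def find_customers(connection: sqlite3.Connection, name: str) -> list:
    """Look up customers by exact name using a bound parameter."""
    # The ? placeholder sends the value separately from the SQL text,
    # so quotes or SQL keywords in `name` are treated as data, not code.
    cursor = connection.execute(
        "SELECT id, name FROM customers WHERE name = ?", (name,)
    )
    return cursor.fetchall()


print(find_customers(conn, "Alice"))         # [(1, 'Alice')]
print(find_customers(conn, "x' OR '1'='1"))  # []
```

The second call shows a classic injection string returning no rows, because it is compared as a literal name instead of being spliced into the statement.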

9 — Concrete pilot projects & rollout plans

The three pilot projects below deliver measurable business outcomes and suit a first modernization or AI integration effort.

Pilot A — “Delphi + AI Document Summarizer” (MVP in 2–4 weeks)

- Goal: Add a document summarization feature to an existing desktop application.

- Approach: Host a small FastAPI service that calls an LLM, then call it from the Delphi UI asynchronously.

- Deliverables: FastAPI microservice, Delphi async integration, UI dialog to request/receive a summary, small test dataset.

- Why: High visible value, minimal UI changes, immediate user benefit.

Pilot B — “Reporting Module Extraction” (4–8 weeks)

- Goal: Extract a fragile reporting module into a service that can be called from multiple clients.

- Approach: Implement a REST service that encapsulates report generation, validate output against existing reports, migrate UI to call the service.

- Deliverables: REST service, updated Delphi UI hook, integration tests and comparison reports.

- Why: High business value, reduces duplication and centralizes logic.
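Validating the new service against existing reports can start as a plain text comparison. This sketch assumes both the legacy module and the new service can export a report as text. The normalization rule (ignore trailing whitespace and blank lines) is an assumption to adjust per report format, and the function names are illustrative.

```python
import difflib
from typing import List


def normalize(report_text: str) -> List[str]:
    """Drop trailing whitespace and blank lines so cosmetic noise is ignored."""
    return [line.rstrip() for line in report_text.splitlines() if line.strip()]


def compare_reports(legacy: str, service: str) -> List[str]:
    """Return unified-diff lines; an empty list means the reports match."""
    return list(
        difflib.unified_diff(
            normalize(legacy),
            normalize(service),
            fromfile="legacy",
            tofile="service",
            lineterm="",
        )
    )
```

Running this over a folder of archived reports and saving any non-empty diffs produces the comparison reports listed in the deliverables.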

Pilot C — “Migration Pilot to .NET (small module)” (8–16 weeks)

- Goal: Convert one mid-sized module (2–10k LOC) to C# using an automated tool + manual polish.

- Approach: Use a migration toolkit to generate C# code, then refactor and add tests. Validate against production data.

- Deliverables: Converted module, regression test suite, migration checklist and time/cost report.

- Why: Validates migration approach at reasonable risk and cost.


10 — FAQ and next steps

Q: What is the Delphi programming language best used for?

A: Delphi is best used for native desktop applications, internal line-of-business (LOB) tools, and situations where rapid visual UI development and native performance are priorities.

Q: Is Delphi still relevant in 2025?

A: Yes — particularly for enterprises and ISVs that have invested in Delphi applications. Embarcadero continues product updates and community editions, ensuring ongoing viability.

Q: Can Delphi be used with AI and LLMs?

A: Yes. The most practical approach is to call LLMs over REST (either directly to providers or via your own Python microservice). Community libraries exist to ease connecting Delphi to OpenAI-style APIs.

Q: Should we migrate a large Delphi app or modernize in place?

A: Usually begin with an inventory and pilot. Incremental modernization (wrap, extract services) is lower risk and often delivers business value sooner than a full rewrite. When conversion is needed, automated tools can speed up the process but require manual polishing and extensive testing.

Appendix — Practical links & tools (starting points)

- Embarcadero / RAD Studio release notes and patches (active updates through 2024–2025).

- TNetHTTPClient and modern HTTP client docs — use these for async REST calls from Delphi.

- Python4Delphi (P4D) — embed Python inside Delphi when needed.

- OpenAI client libraries for Delphi (community drivers) — useful starting point when integrating LLMs.

- Migration vendors & case studies (Ispirer) — real enterprise migration examples and automation toolkits.
